Your analytics stack is dying. Here's what's going to replace it

The monolithic martech stack is collapsing. An open source, warehouse-first intelligence platform is cheaper, more honest, and actually yours.

As a marketing team matures, disparate data sources are centralised, increasingly sophisticated SQL queries begin to pull insights together, and the stars align. In time, data becomes central to everything the team does, and they rely on accurate, timely data to make informed decisions that drive campaign success and maximise customer engagement. All is well until… BOOM, something breaks. Critical reporting is not functioning as expected, and stakeholders across the business are beginning to lose the trust in data that you worked so hard to instil. The culprit? Outdated SQL operations and stacked scheduled queries.

Bottlenecks in knowledge, the lack of any sort of dev/prod workflow and no versioning meant this was only a matter of time. It was a ticking time bomb...

Stacked scheduled queries are a series of dependent SQL queries that run on a set schedule, each building on the results of the previous one. Updates and edits are often made directly on live 'data products', adding to the risk of failure. On top of this, these workflows usually lack robust version control (with maybe some good eggs manually backing up SQL in a git repo). This makes it challenging to track changes or to let multiple team members work concurrently without conflicts. To be clear, this approach is fine up to a point. If you have one or two data sources and a couple of data models built on top, unpicking that basic tapestry should not be too much of a challenge when things go wrong. I will add a caveat to my caveat here, though: if you can build your processes using Dataform from the off, definitely do so, as it saves you a rebuild down the line.

To summarise, some of the main issues with basic SQL approaches:

You might think: so what? If it breaks, I know where the problem will be. I built this masterpiece! But there are costs that you may not initially consider.

It may take time to fix issues, delaying decisions and limiting how agile you can be as a business. And what if an error in reporting is not obvious? Something somewhere in the stack has gone wrong, but it has not broken the downstream reporting. You may well be carrying those errors into budgeting, forecasting, spending and compliance decisions.

Anyone who has worked with stakeholders and data knows how hard-fought buy-in and trust are. One undetected issue or misinterpreted value could well undo all the work undertaken to instil that trust.

Should a problem arise, there is the sticky issue of fixing it. Digging through long, complex SQL statements chained together is a lengthy and difficult process, especially if you did not originally put that SQL together. Over the lifetime of a solution, many hours will be spent trying to understand the SQL before a fix can even be attempted. And that is before we mention all the repeated pieces of SQL being written over and over by individuals working in silos.

To prevent these issues, organisations need to adopt modern data engineering platforms like Dataform or its older brother dbt (I think this small blog by dbt does the best job of explaining why these tools were created), both of which are particularly suited to managing marketing data operations. The choice of tool can depend on where your data currently lives. Dataform is tightly integrated with BigQuery and is free, making it an ideal choice if most of your data is already centralised there. As Google Cloud partners, we spend a lot of our time in Dataform.

We build intelligence platforms on BigQuery, Dataform and Google Cloud — from setup to ongoing optimisation.

So how does the adoption of Dataform help with the fragility and the costs of outdated SQL processes?

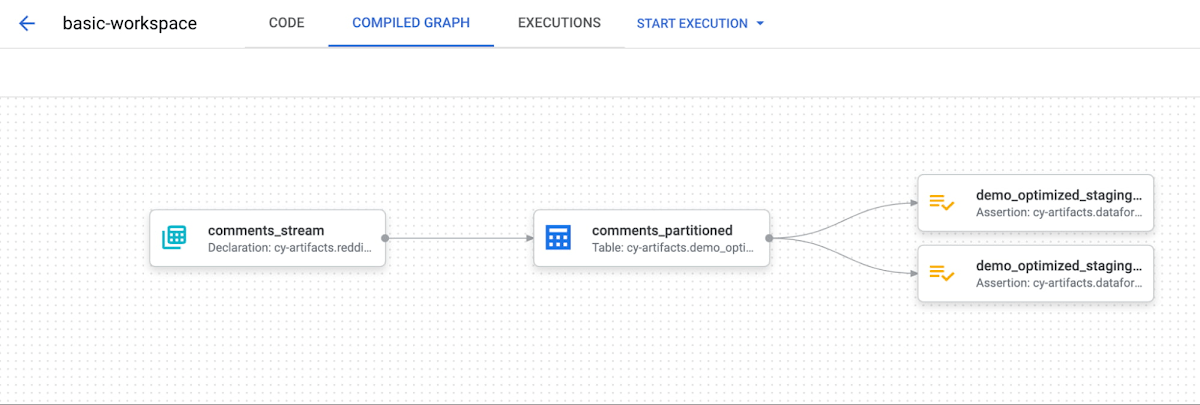

Dataform automatically manages dependencies between datasets and queries. This ensures that if one dataset changes, all dependent datasets are updated accordingly, eliminating single points of failure. Additions like compiled graphs make it super easy to see and manage dependencies.
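To make the idea of dependency management concrete, here is a minimal sketch in plain Python. It is not Dataform code, and the table names are hypothetical. It assumes each model simply declares which tables it reads from, then derives a safe run order and the set of tables affected by a change, which is roughly the bookkeeping a stack of scheduled queries leaves you to do by hand.

```python
from graphlib import TopologicalSorter

# Hypothetical marketing models: each key lists the tables it reads from.
dependencies = {
    "stg_ga4_sessions": {"raw_ga4_events"},
    "stg_ad_spend": {"raw_ad_platform_costs"},
    "campaign_performance": {"stg_ga4_sessions", "stg_ad_spend"},
    "weekly_exec_report": {"campaign_performance"},
}


def run_order(deps):
    """Return an execution order in which every table follows its inputs."""
    return list(TopologicalSorter(deps).static_order())


def downstream_of(table, deps):
    """Return every table that directly or indirectly reads from `table`."""
    affected = set()
    frontier = [table]
    while frontier:
        current = frontier.pop()
        for name, inputs in deps.items():
            if current in inputs and name not in affected:
                affected.add(name)
                frontier.append(name)
    return affected


order = run_order(dependencies)
print(order)
print(sorted(downstream_of("stg_ad_spend", dependencies)))
```

With timed schedules, the order only holds as long as each query finishes before the next one starts. Here the order is derived from the declared inputs, so adding a new model cannot silently run before the tables it depends on.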

By integrating with version control systems like Git, Dataform allows teams to track changes, collaborate effectively, and roll back to previous versions if necessary. It also lets the team work on development versions of a codebase without fear of breaking production-level data products.

Dataform enables teams to include tests within the data workflow. This ensures data quality by validating datasets at each stage of the pipeline. These are called assertions and can be configured to inform you of issues, or stop a pipeline altogether.
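As a rough illustration of how an assertion behaves, the sketch below treats each one as a query that selects the offending rows, so an empty result means the check passed. It runs against an in-memory SQLite table rather than BigQuery, and the table, the assertion names and the `stop_on_failure` flag are all hypothetical stand-ins for the "inform or stop" choice described above.

```python
import sqlite3


def failing_rows(conn, assertion_sql):
    """Run an assertion query; any row it returns is a violation."""
    return conn.execute(assertion_sql).fetchall()


def run_assertions(conn, assertions, stop_on_failure=True):
    """Check each named assertion, then either raise or collect failures."""
    failures = {}
    for name, sql in assertions.items():
        rows = failing_rows(conn, sql)
        if rows:
            if stop_on_failure:
                raise ValueError(f"assertion {name!r} failed for {len(rows)} row(s)")
            failures[name] = rows
    return failures


# Hypothetical sample data with one duplicate key and one missing value.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE campaign_spend (campaign_id TEXT, spend REAL)")
conn.executemany(
    "INSERT INTO campaign_spend VALUES (?, ?)",
    [("spring_sale", 120.0), ("spring_sale", 80.0), ("brand", None)],
)

assertions = {
    "campaign_id_is_unique": """
        SELECT campaign_id FROM campaign_spend
        GROUP BY campaign_id HAVING COUNT(*) > 1
    """,
    "spend_is_not_null": "SELECT campaign_id FROM campaign_spend WHERE spend IS NULL",
}

# Report-only mode: collect the violations instead of halting.
report = run_assertions(conn, assertions, stop_on_failure=False)
print(report)
```

Calling `run_assertions(conn, assertions)` with the default flag raises on the first failed check instead, which mirrors stopping a pipeline before bad data reaches a dashboard.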

Designed to handle large-scale data operations, Dataform optimises query execution and resource usage, allowing your data infrastructure to grow with your marketing data needs.

With a clear visual representation of data workflows, Dataform makes it easier to understand and troubleshoot the data pipeline, reducing downtime and increasing efficiency. Dataform also moves you to a modular approach to SQL, making it much simpler to understand and update when necessary.

Dataform supports the use of JavaScript (JS), providing additional flexibility to transform data beyond the capabilities of standard SQL. The ability to use JS variables and functions for repeated operations makes it easier to create reusable code, reducing redundancy and saving time. This allows for more dynamic transformations and custom logic, giving teams greater power to tailor their data workflows to specific requirements. If you wished, you could write your entire data modelling process in JS!
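The reuse pattern can be sketched as follows. In Dataform this would be a JS function, but the example is written in Python to keep it runnable on its own, and the channel rules, column names and table are hypothetical. The point is that a piece of logic that would otherwise be copy-pasted between queries is defined once and dropped into any SQL that needs it.

```python
import sqlite3


def channel_grouping(source_col, medium_col):
    """Build a CASE expression that maps source/medium to a channel name."""
    return (
        "CASE\n"
        f"  WHEN {medium_col} = 'cpc' THEN 'Paid Search'\n"
        f"  WHEN {medium_col} = 'email' THEN 'Email'\n"
        f"  WHEN {source_col} = '(direct)' THEN 'Direct'\n"
        "  ELSE 'Other'\n"
        "END"
    )


def sessions_by_channel(table, source_col="source", medium_col="medium"):
    """Build a query that counts sessions per channel for any table."""
    return (
        f"SELECT {channel_grouping(source_col, medium_col)} AS channel,\n"
        "       COUNT(*) AS sessions\n"
        f"FROM {table}\n"
        "GROUP BY channel"
    )


# Try the generated SQL against a small in-memory table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sessions (source TEXT, medium TEXT)")
conn.executemany(
    "INSERT INTO sessions VALUES (?, ?)",
    [("google", "cpc"), ("newsletter", "email"), ("(direct)", "(none)"), ("google", "cpc")],
)
counts = dict(conn.execute(sessions_by_channel("sessions")).fetchall())
print(counts)
```

If the definition of "Paid Search" changes, it changes in one function rather than in every query that happens to contain its own copy of the CASE statement.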

Transitioning to a modern data engineering platform might seem challenging, but the long-term benefits are well worth the effort. Here are a few things to consider to get you started

The risks of relying on outdated SQL operations are too big to ignore. Continuing to use stacked scheduled queries means taking unnecessary risks with your data integrity and marketing success. Adopting modern data engineering platforms like Dataform is not just a technical upgrade—it's a strategic move that can protect your organisation's future.

Measurelab's advice? Take action now to modernise your SQL operations and build a strong, reliable data infrastructure that supports your marketing success. Reach out to Measurelab, who can help with any Dataform questions or projects you may have in mind!
