Clearing 2026: why UK universities need recruitment intelligence

UK universities face a deficit crisis. Student Recruitment Intelligence can transform Clearing from chaos to precision.

Every Google BigQuery code you run costs money – the amount you pay can vary hugely based on different factors. Although the amount may be minimal, as your dataset increases, so will your costs. Let’s have a look at the various ways you can limit the number of records retrieved through your code, some that save you money and some that don’t.

Some of the biggest costs you can incur is while you’re busy developing code for a project. It's all too easy to write code and click run before truly thinking about how much that code will cost. Luckily, we have some scripts and processes you can use to help reduce the BigQuery cost of data processing.

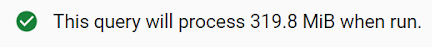

BigQuery has a great little nugget of information in the top right corner of your script. It basically gives you a rough estimate of how much data your code is going to process when you click the run button. It looks something like this:

As you change your code, you will see this number go up and down accordingly. We tend to start questioning code once it has gone above 15GB. Sometimes this could be valid due to large datasets, but it’s always worth checking your code a couple of times to see if there is anywhere you could make a saving.

"I know what I’ll do, I’ll just add LIMIT to the bottom of my SQL and just get the first 1,000 records out – 1,000 records can’t cost a lot of money, right?"

Wrong! By the time you come to the limit section of your code, BigQuery has already processed all the data to get the information you are seeing. That processing cost is all yours my friend. It’s like asking someone to bake a cake so that you can just have a slice of it…effort has still been spent making the whole cake.

Limit is a great way of seeing just a few records, but doesn't have an impact on the processing costs. If you are used to using limits to get an example of the data, I’d recommend opening the table you want to look at and then selecting “Preview”.

We build intelligence platforms on BigQuery, Dataform and Google Cloud — from setup to ongoing optimisation.

Unlike some other SQL products, BigQuery reads data column by column, not row by row. This means that if you limit the number of columns you're pulling data from, it will dramatically affect the amount of bytes it takes to process the information (and the cost).

The key rule here is do not use SELECT * (like ever). Instead, think about the key pieces of information you actually want to see and don't forget that you can review the table for free and see what is available.

Another great way to reduce the number of bytes used to process a query is to ensure key columns are partitioned and/or clustered. This has to be done at the point the table is created (a workaround could be to create a table from existing tables’ data and add the partitioning and clustering). This will allow BigQuery to organise your data based on certain columns; this way, when you limit by those columns, the bytes used will reduce significantly.

On the whole, sampling a table is a fabulous option to allow you to play with a random selection of data while you’re developing code. The amount of data you're selecting is completely up to you – you can stipulate the percentage of data you would like to look at.

If you apply a table sample to the table you are querying, then you will only be querying a random sample from that data. The keyword here is ‘random’. Be warned: each time you run the code, it will be a different random sample, so your data will never match a prior extract (this can cause problems if you are trying to reconcile data). The code looks like this:

SELECT * FROM dataset.my_table TABLESAMPLE SYSTEM (10 PERCENT)If you’re in the process of developing code and know you’re going to run sections of your code to test as you go, I would recommend creating a staging table from your dataset using a table sample. This way, you can develop to your heart’s content knowing you are querying the table using a fraction of the bytes.

The data won’t be correct (as it’s not all there), but it will enable you to write code at a fraction of the cost. There are some limitations to the table sample code to bear in mind, however:

We build intelligence platforms on BigQuery, Dataform and Google Cloud — from setup to ongoing optimisation.

Take our short assessment to find out where your data stack stands and what to prioritise next.

UK universities face a deficit crisis. Student Recruitment Intelligence can transform Clearing from chaos to precision.

Dara and Matthew recap 2025 with 12 top highlights, AI, analytics, and the best moments on The Measure Pod.

AI expert Daniel Hulme, founder of Satalia, shares his journey from UCL PhD to entrepreneur, discussing AI, consciousness & deep tech.